Confidential Coordination I: Beyond Privacy as Shield

This essay is the first in a series on confidential coordination: producing shared outcomes from private inputs without data exposure or single-operator control. The Interfold is the distributed network that implements it.

Markets now clear in milliseconds without human intervention. Credit is extended or denied by models trained on data no applicant will ever see. Treatment pathways are optimized by systems trained on patient histories that no individual clinician can fully inspect.

Increasingly, the decisions that shape economic and political reality are not made solely by people debating in rooms. They are processed through computational systems.

The infrastructure that once mediated communication now increasingly mediates outcomes. And when independent parties must combine private inputs to produce those shared outcomes, execution becomes a question of authority: who can see, who can decide, and who is positioned to benefit.

Privacy once secured the boundaries of participation. But when participation itself becomes computation, the privacy problem shifts from access alone to execution.

We call this category confidential coordination: situations where independent actors must produce a shared outcome from private inputs without pooling data or surrendering execution to a single operator. The Interfold is the distributed network we are building to make this model durable through system design rather than institutional trust.

Privacy as Shield Is Insufficient

For much of the internet era, privacy was treated primarily as access control:

A filter you apply.

A setting you toggle.

A boundary around what is yours.

That framing reflected the architecture of the time. Digital systems were built for publication and broadcast. Information moved outward by default, splayed onto websites, into feeds, across platforms.

As long as digital coordination primarily meant exchanging information rather than producing shared computational outcomes, this architecture held. Aggregation — whether financial, medical, or electoral — could occur within trusted institutional authorities without destabilizing the broader coordination model.

But as coordination becomes less conversational and more computational, the stakes change.

- How can voters cast ballots without fear that someone, somewhere in the stack, can reconstruct how they voted?

- How can bidders compete without revealing their hand before the auction closes?

- How can institutions generate shared insight when the required data is siloed by HIPAA, contracts, and jurisdiction?

When coordination fails here, it is not abstract. It means cancer trials slowed by legal walls, markets tilted by asymmetric insight, and collective decisions degraded because participants cannot trust the counting process. Over time, that erosion compounds.

Privacy becomes durable only when it governs execution, not just access.

The moment multiple parties combine inputs to produce a shared result — to price risk, to model a pandemic, to allocate capital — the old privacy boundary begins to fail. Coordination becomes a liability: either the data becomes visible somewhere in execution, or it is entrusted to an intermediary who sees on everyone’s behalf.

And that intermediary does not just see the result. It sees the game before the outcome. It sees strategy, edge cases, failed bids, partial signals: the raw material of advantage.

That tradeoff made sense in a world built around trusted custodians, but it’s untenable in a world where institutions cannot legally merge records, share strategy, or surrender sensitive data to a single authority.

The problem is not who can access the data at rest, but who must see it in order for coordination to even happen.

The Coordination Trilemma

The constraint emerges from the mechanics of computation itself.

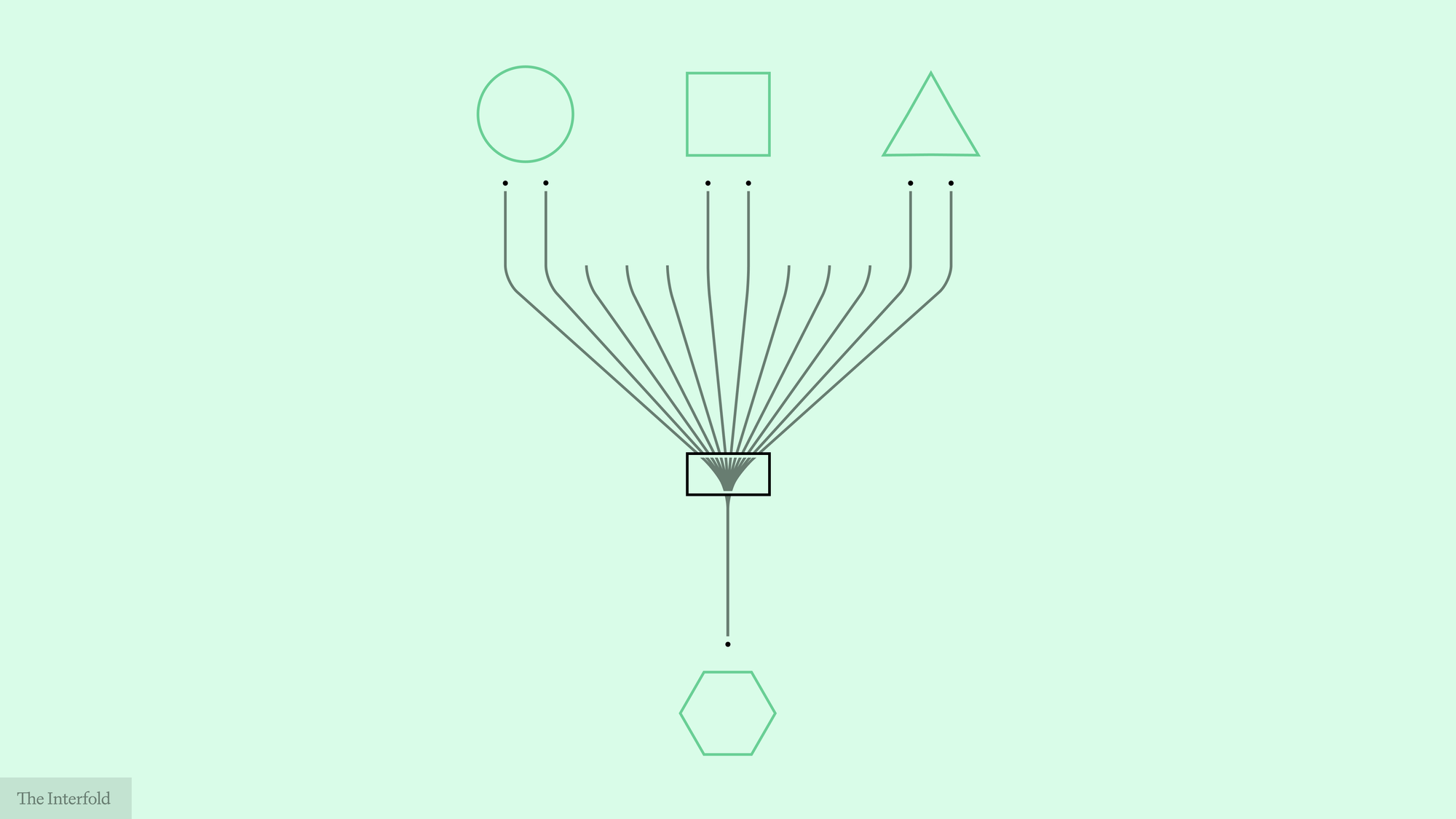

Any system that combines inputs from multiple independent parties must simultaneously address these properties:

- Confidentiality

- Aggregation

- Verifiability

This can be understood as a coordination trilemma: a recurring architectural constraint in which confidentiality, aggregation, and verifiability have historically been difficult to achieve together without concentrating trust.

In practice, most architectures have been better at delivering two of these properties than all three at once.

Confidentiality + Aggregation

Private inputs combine into a shared result

→ Verification or release depends on a trusted party

Aggregation + Verifiability

Outcomes can be audited and relied upon

→ Inputs or intermediate state become more exposed

Confidentiality + Verifiability

Inputs remain protected and correctness can be proven

→ Computation becomes narrower, more costly, or requires stronger coordination

Custody was not merely a governance choice. It followed from how computation had to be organized. Execution centralized not out of preference, but because the available mechanics of computation made privileged visibility difficult to avoid.

The point of failure was not access alone, but execution: who must see inputs in order for aggregation to occur, and who controls the release of outcomes.

Devansh Mehta (@devanshmehta), March 31, 2026:

"Finally understood the issue of making Ethereum a privacy chain from @auryn_macmillan

There's a trilemma where we can only choose 2 from shared state, privacy & verifiability

Eth has chosen shared state and verifiable, which makes privacy very difficult to integrate"

Consider a secret ballot. A vote is not merely data to aggregate. It is political agency exercised under the promise of secrecy. Yet to produce a tally, systems historically tended to rely on some actor or mechanism entrusted to see, count, or certify on behalf of others. If secrecy is preserved, trust shifts to the counter. If transparency is maximized, exposure to collusion and coercion becomes possible.

The tradeoff is mechanical, not moral.

For decades, institutions absorbed the burden of this constraint through trust. They did not merely hold power; prevailing architecture often made them the actors responsible for reconciling confidentiality, aggregation, and verification.

Why Execution Centralized

As a result, custodial execution became the default architecture of digital coordination, with aggregation environments acting as centers of authority.

Markets cleared through exchanges.

Data accumulated within analytics providers.

Sensitive records concentrated in credit bureaus.

Even hardware security modules (HSMs) and trusted execution environments (TEEs) preserved the same topology: computation still occurred inside privileged enclaves. Exposure changed shape, but the trust boundary was relocated rather than removed.

Control over execution often conferred informational advantage — not necessarily through malice, but through structural position within the system. And wherever informational asymmetry is structural, decision-making power consolidates around it, shaping how shared economic and political reality is constructed.

Coordination created value, but architecture determined who captured it (and who did not).

This pattern held even as other layers of digital infrastructure were disintermediated:

- The internet redistributed authority over publication and narrative.

- Blockchains redistributed authority over state transition and economic finality.

In each case, a coordination function opened up and was made more accessible.

But execution did not follow the same path. Even as communication and settlement became more distributed, execution remained structurally centralized. Computation still required a locus of control, and even when inputs were encrypted, runtime authority remained concentrated inside aggregation environments.

Execution Beyond Custodial Visibility

This constraint does not disappear because institutions evolve. It loosens when the mechanics of computation evolve.

Advances in programmable cryptography alter the mechanics of aggregation itself. Different mechanisms now make different parts of the problem tractable: some support computation over encrypted state, some verify correctness without revealing underlying inputs, and some distribute execution authority across independent participants. These include secure multi-party computation (MPC), fully homomorphic encryption (FHE) where applicable, and zero-knowledge proof (ZKP) systems.

Aggregation no longer has to presuppose exposure. What looked like an institutional necessity turns out to be a technical artifact.

Execution reorganizes from discretionary custody to collectively enforced computation.

Disintermediating execution does not eliminate institutions. It removes the structural requirement that execution centralize visibility.

The point is not to pretend trust disappears, but to prevent visibility, execution, and outcome release from collapsing into a single discretionary authority. And when privileged visibility is no longer required for computation, custodial execution no longer has to be the default architecture.

The center does not disappear. Its function changes. Authority is redistributed, constrained, and made explicit through the structure of computation itself.

The New Coordination Layer

When execution is decoupled from custodial visibility, entire categories of coordination can change.

Collective decisions can be computed without transferring custody of individual inputs.

- Secret ballots can be tallied without exposing how anyone voted.

- Committee rankings can be aggregated without revealing individual preferences.

- Grant allocations can be computed without disclosing internal scoring.
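To make the ballot case concrete, here is a minimal sketch of one MPC building block, additive secret sharing. It is not the Interfold's protocol, which this essay does not specify. The names `share` and `tally`, the modulus, and the three-party setup are all assumptions for illustration. The sketch also assumes the parties follow the protocol and that every ballot is a valid 0 or 1; a real system would need proofs of ballot validity.

```python
import secrets

# Illustrative modulus; any prime comfortably larger than the number of voters works.
PRIME = 2**61 - 1

def share(value: int, n_parties: int) -> list[int]:
    """Split `value` into n additive shares that sum to `value` mod PRIME."""
    shares = [secrets.randbelow(PRIME) for _ in range(n_parties - 1)]
    last = (value - sum(shares)) % PRIME
    return shares + [last]

def tally(ballots: list[int], n_parties: int) -> int:
    """Compute the sum of the ballots while each party only ever holds shares."""
    # Each voter sends exactly one share to each party.
    inboxes: list[list[int]] = [[] for _ in range(n_parties)]
    for ballot in ballots:
        for inbox, s in zip(inboxes, share(ballot, n_parties)):
            inbox.append(s)
    # Each party publishes only the sum of the shares it received.
    partial_sums = [sum(inbox) % PRIME for inbox in inboxes]
    # Combining the partial sums yields the total, not any individual ballot.
    return sum(partial_sums) % PRIME

ballots = [1, 0, 1, 1, 0]          # 1 = yes, 0 = no
print(tally(ballots, n_parties=3))  # 3
```

Any single party sees only uniformly random-looking shares, so an individual vote stays hidden unless all of the parties pool what they hold.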

Competitive markets can clear without granting operators structural visibility into strategy.

- Sealed-bid auctions can determine a winner without revealing bid strategy.

- Liquidity commitments can be matched without exposing edge conditions.

- Pricing mechanisms can execute without making operator visibility a structural advantage.
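As a rough illustration of the auction case, the sketch below uses a plain hash-based commit-reveal scheme. This is a deliberately weaker technique than what the list above describes: it hides bids only until the auction closes, and every bid is revealed afterwards. Keeping losing bids private would need MPC, FHE, or ZKP machinery. The function names `commit`, `verify`, and `pick_winner` are hypothetical.

```python
import hashlib
import secrets

def commit(bid: int) -> tuple[str, bytes]:
    """Return (commitment, nonce). Only the commitment is published before close."""
    nonce = secrets.token_bytes(32)  # fixed length keeps the hash input unambiguous
    digest = hashlib.sha256(nonce + str(bid).encode()).hexdigest()
    return digest, nonce

def verify(commitment: str, bid: int, nonce: bytes) -> bool:
    """Check that a revealed (bid, nonce) pair matches the earlier commitment."""
    return hashlib.sha256(nonce + str(bid).encode()).hexdigest() == commitment

def pick_winner(commitments: dict[str, str],
                openings: dict[str, tuple[int, bytes]]) -> str:
    """Return the highest bidder among openings that match their commitments."""
    valid = {
        name: bid
        for name, (bid, nonce) in openings.items()
        if name in commitments and verify(commitments[name], bid, nonce)
    }
    if not valid:
        raise ValueError("no valid openings")
    return max(valid, key=valid.get)

commitments, openings = {}, {}
for name, bid in {"alice": 120, "bob": 150}.items():
    commitments[name], nonce = commit(bid)
    openings[name] = (bid, nonce)
print(pick_winner(commitments, openings))  # bob
```

A bidder who reveals a different bid than the one committed to fails `verify` and is excluded, so no one can adjust a bid after seeing the others.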

Cross-institutional modeling can occur without collapsing institutional boundaries.

- Hospitals can compute aggregate outcomes without merging patient records.

- Financial institutions can model systemic risk without exposing portfolios.

- Collaborative AI systems can train across distributed proprietary datasets without central data capture.

The incentives change. Markets can reorganize around verifiable fairness rather than structural visibility. Research networks can move toward encrypted collaboration instead of data pooling. Multi-agent systems need not rely on centralized orchestration by default.

Authority can shift from discretionary custody to protocol-enforced guarantees. The result is not opacity, but a more symmetrical distribution of authority. Custodial concentration becomes optional rather than structurally required.

Introducing Confidential Coordination

Confidential coordination describes situations where independent actors must produce a shared outcome from private inputs without pooling data or delegating execution authority to a single operator.

To make this shift durable, confidential coordination must be implemented through protocol and distributed enforcement rather than policy alone.

We are building the Interfold to operationalize this model.

The Interfold is a distributed network for confidential coordination. It allows independent parties to produce shared, verifiable outcomes from private inputs without concentrating custody, visibility, and outcome control in a single operator. It is not merely a cryptographic primitive or a hosted execution service.

That distinction matters because encrypted computation under a single operator still preserves a centralized trust boundary around execution. Confidential coordination must be networked by design.

Within the Interfold, no single ciphernode can unilaterally expose private inputs, control decryption, alter defined computation logic, or determine outcomes. Verification is collective, and threshold cryptography separates control over computation, key material, and outcome release across independently constrained participants. Breaking confidentiality would require coordinated compromise across a threshold, not failure at a single point.
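The threshold property can be sketched with Shamir secret sharing, a standard construction in which any `threshold` shares recover a secret and fewer do not. This is only an illustration of the idea under stated assumptions: the essay does not say which threshold scheme the Interfold uses, and production threshold decryption typically never reassembles the key in one place. The names `split` and `reconstruct`, the field modulus, and the 3-of-5 parameters are assumptions.

```python
import secrets

PRIME = 2**127 - 1  # a Mersenne prime, used as the field modulus (illustrative)

def split(secret: int, threshold: int, n: int) -> list[tuple[int, int]]:
    """Split `secret` into n shares; any `threshold` of them can recover it."""
    # Random polynomial of degree threshold-1 whose constant term is the secret.
    coeffs = [secret] + [secrets.randbelow(PRIME) for _ in range(threshold - 1)]

    def evaluate(x: int) -> int:
        acc = 0
        for c in reversed(coeffs):  # Horner's rule
            acc = (acc * x + c) % PRIME
        return acc

    return [(x, evaluate(x)) for x in range(1, n + 1)]

def reconstruct(shares: list[tuple[int, int]]) -> int:
    """Recover the secret by Lagrange interpolation at x = 0."""
    secret = 0
    for i, (xi, yi) in enumerate(shares):
        num, den = 1, 1
        for j, (xj, _) in enumerate(shares):
            if i != j:
                num = (num * -xj) % PRIME
                den = (den * (xi - xj)) % PRIME
        secret = (secret + yi * num * pow(den, -1, PRIME)) % PRIME
    return secret

key = 123456789
shares = split(key, threshold=3, n=5)  # five holders, any three suffice
print(reconstruct(shares[:3]) == key)   # True
print(reconstruct(shares[2:]) == key)   # True
```

With only two of the five shares, interpolation returns an unrelated value except with negligible probability, which is the sense in which compromise has to be coordinated across a threshold.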

Confidential coordination is not a feature layered onto existing systems. It is a coordination layer in which privacy is embedded in execution rather than relegated to access control.

In systems that mediate markets, medicine, and collective risk, that distinction has systemic consequences. It changes how informational advantage is distributed, redistributes authority over execution, and makes new forms of cross-institutional collaboration legally and technically viable.

Privacy here is not primarily concealment. It is leverage preserved within coordination. And when custodial visibility is no longer built into execution, informational privilege no longer needs to be structural.

Institutions can reorganize around new guarantees.

Markets can function under different visibility assumptions.

Multi-agent systems can operate within a different topology of trust.

To compute together at scale without reproducing old asymmetries, execution cannot rely on custodial visibility as its default condition.

Confidential coordination names the category.

The Interfold is the distributed network designed to implement it.

Next in the series: why confidential coordination requires a network, not just better encryption.
