Confidential Coordination II: Why It Requires a Network

This essay is the second in a series on confidential coordination. It argues that once private inputs must produce a shared outcome, execution authority becomes the central problem, and solving it requires a network rather than a single execution environment.

Many forms of coordination depend on participants contributing private information. Elections rely on secret ballots so citizens can vote without coercion. Markets rely on sealed bids so competitors cannot manipulate prices. Organizations increasingly combine sensitive datasets to make collective decisions.

These are coordination problems where private inputs must be combined to produce a shared outcome. The issue is how to do so without exposing those inputs or concentrating outcome control in the hands of a single operator. This is the problem of confidential coordination.

Traditional privacy systems were designed to protect information: encryption protects messages in transit, access controls restrict who can read records, and secure storage protects data at rest.

But systems that produce shared outcomes operate under a different constraint. If private inputs must be combined to produce a shared result, what governs that process? If no one is meant to see the inputs, who controls how they are combined, how the result is produced, and whether it can be trusted at all?

Aggregation Creates Authority

The problem becomes concrete at the point where private inputs must be combined to produce a shared outcome.

In an election, votes are cast privately so political preferences cannot be surveilled or coerced. Yet even when voting is private, the outcome still depends on a single operation: counting the votes. The process that counts and certifies those votes determines how the outcome is produced.

The same structure appears in sealed-bid auctions, a mechanism widely used across digital markets, procurement, and DeFi. Participants submit bids without revealing them to competitors, but determining the winner requires comparing all bids against one another. The authority performing that comparison determines which bidder prevails.

In both cases, confidentiality protects participation, but the outcome emerges only when inputs are aggregated.

These dynamics become especially explicit in digital coordination systems, where the processes that collect, combine, and evaluate inputs are implemented as protocols. As coordination is increasingly mediated by computation, the authority once exercised by institutions becomes embedded in the systems that govern execution.

Aggregation is therefore not merely a technical step. It is the point at which outcomes are fixed and execution authority is exercised.

This has led to the development of cryptographic systems designed to preserve privacy during computation. But while these systems protect inputs, they do not eliminate the role of execution in determining outcomes.

Encryption Does Not Remove Authority

Modern cryptography attempts to reduce reliance on trusted operators by enabling computation over encrypted or otherwise concealed data. Techniques such as secure multiparty computation, zero-knowledge proofs, and fully homomorphic encryption extend what can be computed without revealing inputs.

Yet none of these primitives resolves the concentration of authority at the point of execution. In practice, encryption shifts the problem rather than removes it. Input visibility is reduced, but control over execution becomes the decisive point of authority.

This leaves a more fundamental question: who controls execution once inputs are encrypted?

A system may guarantee, for example, that no one can see any individual bid while still allowing the operator to decide when the auction closes, or whether it closes at all. Even under these conditions, inputs from multiple participants must still be received, processed, and turned into a shared result.

The runtime determines when inputs are accepted, how they are ordered, whether execution completes, and under which conditions outputs are released. In confidential coordination, this includes a more specific question as well: who controls decryption conditions once a shared plaintext result must be produced?

When inputs remain encrypted, these controls still shape outcomes. Encryption protects visibility, but it does not govern execution.
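A toy sketch can make this concrete. It is an illustration under stated assumptions, not a real protocol: the names `SealedBid`, `make_toy_auction`, and `run_auction` are hypothetical, no actual cryptography is used, and a closure stands in for whatever confidential evaluator (FHE, MPC, or similar) a real system would use. The operator only ever handles opaque tokens and arrival times, yet its choice of closing time changes the winner.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass(frozen=True)
class SealedBid:
    bidder: str
    arrived_at: int    # arrival time, visible to the operator
    ciphertext: bytes  # opaque token; the operator cannot read the amount


def make_toy_auction() -> Tuple[Callable, Callable]:
    """Return (seal, evaluate).

    The plaintext amounts live only inside this closure, which stands in
    for a confidential evaluator. This is NOT real encryption.
    """
    hidden = {}

    def seal(bidder: str, amount: int, arrived_at: int) -> SealedBid:
        token = f"ct-{len(hidden)}".encode()
        hidden[token] = amount
        return SealedBid(bidder, arrived_at, token)

    def evaluate(bids: List[SealedBid]) -> Optional[str]:
        # Highest hidden amount wins; only the winner's name is released.
        if not bids:
            return None
        return max(bids, key=lambda b: hidden[b.ciphertext]).bidder

    return seal, evaluate


def run_auction(bids: List[SealedBid], evaluate: Callable, close_at: int) -> Optional[str]:
    """The operator's only lever here is when to stop accepting inputs."""
    accepted = [b for b in bids if b.arrived_at <= close_at]
    return evaluate(accepted)


seal, evaluate = make_toy_auction()
bids = [seal("alice", 100, 1), seal("bob", 120, 5), seal("carol", 150, 9)]

early = run_auction(bids, evaluate, close_at=4)   # only alice's bid is accepted
late = run_auction(bids, evaluate, close_at=10)   # all three bids are accepted
print(early, late)  # alice carol
```

The operator never learns an amount in either run. The outcome differs only because the runtime decided which inputs counted.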

Once computation produces a collective result, authority settles with whatever system is able to govern that runtime, including the conditions under which encrypted state becomes a public outcome.

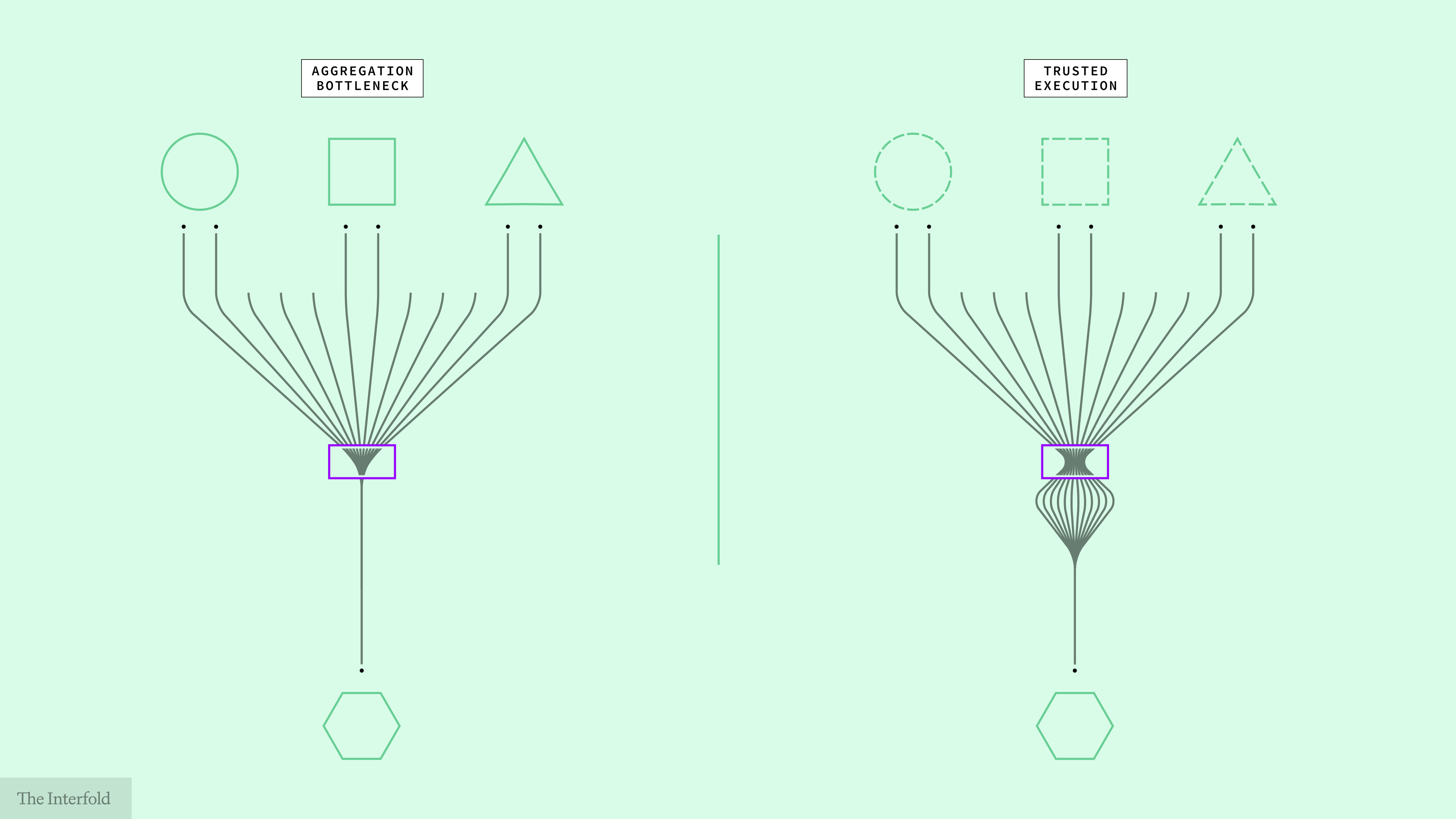

Trusted Hardware Relocates Authority

Recognizing this problem, many systems attempt to minimize trust in operators by pushing execution into protected hardware.

Trusted execution environments (TEEs) and hardware security modules (HSMs) follow this model. Computations run inside specialized processors that isolate memory and prevent external observers from accessing internal state. Even the operator of the host machine cannot directly observe the data once execution begins.

While these environments significantly strengthen confidentiality, they do not eliminate execution authority.

The computation still occurs inside a single machine, a centralized execution environment that is governed by a hardware and firmware supply chain. Participants must trust the manufacturer, firmware, and attestation systems that certify execution.

The machine may be hardened, but execution authority remains singular: one runtime governs completion, failure, and output release.

This is not a hypothetical concern. TEEs have repeatedly been shown to be vulnerable to side-channel attacks, implementation flaws, and compromises that expose the very execution they are designed to secure. Published attacks on Intel SGX, such as Foreshadow and Plundervolt, are well-known examples.

In these protected environments, authority cannot be removed, only displaced.

The Execution Constraint

Taken together, these observations make the constraint explicit.

In the first essay, confidential coordination was defined by three requirements: private inputs, a shared outcome, and verifiable execution. Each is necessary. But none of them eliminates execution authority; they only constrain it.

As long as execution is governed by a centralized environment, that system determines how outcomes are formed: when inputs are accepted, how they are ordered, whether execution completes, and under what conditions results are released. Even when inputs remain encrypted, this control reintroduces authority at the point where outcomes are produced.

This is not a moral tradeoff but a structural one: concentrating control improves liveness, while distributing it improves resistance to overreach. The problem is how to avoid buying one by surrendering the other.

Confidentiality protects inputs, and verifiability allows the process to be checked. But neither determines who controls execution while it runs.

The constraint is therefore not privacy alone, but execution authority itself, including who governs completion, output release, and the conditions under which encrypted state becomes a public result. Every claim to neutrality is downstream of that fact.

As long as that authority remains centralized, confidential coordination collapses back into a system of controlled execution.

Execution Must Be Distributed

Resolving this constraint requires distributing execution authority.

Confidential coordination cannot rely on a single executor without reintroducing the very structure it aims to avoid. If one runtime governs the process, authority remains singular, and custody reappears at the point where outcomes are formed.

Distributing execution authority is not simply a matter of running computation across multiple machines. A system is only distributed in a meaningful sense if no single actor, and no coordinated subset of actors smaller than a defined threshold, can unilaterally determine outcomes.
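The difference between many machines and distributed authority can be shown with a small check. This is a minimal sketch under assumptions: the function name `controlling_parties`, the node-to-operator mapping, and the threshold of 3 are all hypothetical, and the check covers only single operators, not colluding coalitions.

```python
from collections import Counter
from typing import Dict, List


def controlling_parties(node_operators: Dict[str, str], threshold: int) -> List[str]:
    """Return the operators who alone control at least `threshold` nodes.

    A non-empty result means one actor can meet the threshold unaided,
    however many machines the computation is spread across.
    """
    counts = Counter(node_operators.values())
    return sorted(op for op, n in counts.items() if n >= threshold)


# Five machines, one owner: replicated, but authority is still singular.
same_owner = {f"node{i}": "acme" for i in range(5)}

# Five machines, five independent owners.
independent = {f"node{i}": f"operator{i}" for i in range(5)}

print(controlling_parties(same_owner, threshold=3))   # ['acme']
print(controlling_parties(independent, threshold=3))  # []
```

Both deployments run on five nodes. Only the second satisfies the condition that no single actor can determine the outcome.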

This is a structural requirement, not a choice.

The Interfold is designed as a network for this reason.

In the Interfold, execution authority is divided across independent ciphernode operators and enforced through threshold participation, such that no single actor can unilaterally control outcomes. Results are produced only when defined network conditions are met.
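One standard way to express threshold participation in general is Shamir secret sharing, sketched below. This is an assumption chosen for illustration: the essay does not specify the Interfold's actual scheme, and the names `split` and `combine`, the prime field, and the 3-of-5 parameters are hypothetical. The value being shared stands in for whatever key material gates the release of a result.

```python
import secrets
from typing import List, Tuple

PRIME = 2**127 - 1  # a Mersenne prime; all arithmetic is done modulo this


def split(secret: int, threshold: int, n: int) -> List[Tuple[int, int]]:
    """Split `secret` into n shares; any `threshold` of them reconstruct it."""
    if not 1 <= threshold <= n:
        raise ValueError("need 1 <= threshold <= n")
    if not 0 <= secret < PRIME:
        raise ValueError("secret must lie in the field")
    # Random polynomial of degree threshold-1 with the secret as constant term.
    coeffs = [secret] + [secrets.randbelow(PRIME) for _ in range(threshold - 1)]

    def f(x: int) -> int:
        acc = 0
        for c in reversed(coeffs):  # Horner evaluation
            acc = (acc * x + c) % PRIME
        return acc

    return [(x, f(x)) for x in range(1, n + 1)]


def combine(shares: List[Tuple[int, int]]) -> int:
    """Lagrange interpolation at x = 0 over the prime field."""
    total = 0
    for i, (xi, yi) in enumerate(shares):
        num, den = 1, 1
        for j, (xj, _) in enumerate(shares):
            if i != j:
                num = (num * -xj) % PRIME
                den = (den * (xi - xj)) % PRIME
        # pow(den, -1, PRIME) is the modular inverse (Python 3.8+).
        total = (total + yi * num * pow(den, -1, PRIME)) % PRIME
    return total


shares = split(secret=42, threshold=3, n=5)  # one share per hypothetical ciphernode
print(combine(shares[:3]))                          # 42
print(combine([shares[0], shares[2], shares[4]]))   # 42
```

Any three of the five shares recover the value. Fewer than three yield a point on a different polynomial, which matches the original only with negligible probability, so no single holder can release the result alone.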

A network is not an implementation choice added on top of confidential coordination. It is the structural embodiment of the category.

What follows is a different class of system: not private computation hosted by a neutral intermediary, but confidential coordination enforced through distributed threshold authority.

Next in the series: how execution is structured across a network to produce shared outcomes from private inputs.

Participate in the Interfold network

A distributed network for confidential coordination.

Run a Genesis Ciphernode: Help operate the network by executing computations without exposing underlying data.

Build on Interfold: Create applications that coordinate across private inputs and produce verifiable outcomes.

Follow the Interfold: Track the network as it evolves, with updates, early use cases, and the emergence of a distributed system in practice.