Confidential Coordination III: How Execution Becomes Distributed

This is the third essay in a series on confidential coordination: how private inputs can produce a shared, verifiable result without requiring third-party custody, data exposure, or a trusted intermediary. It explains how execution is structured across a distributed network so that encrypted inputs can produce such a result without any single operator controlling the process.

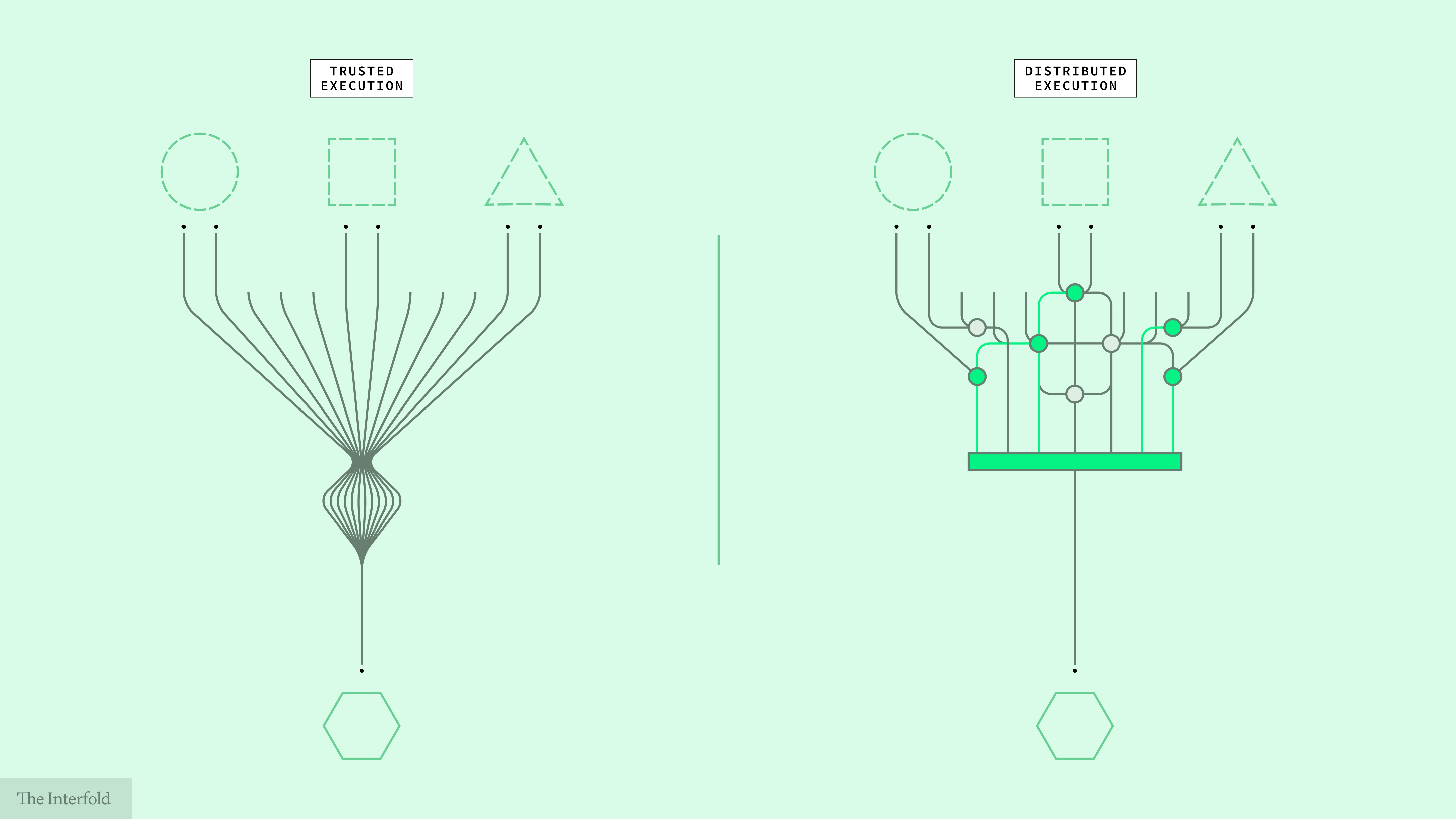

The first essay argued that confidential coordination cannot be reduced to a question of access or data protection alone: if private inputs are to produce a shared result, privacy has to extend into execution itself. The second essay argued that confidential coordination requires a network, because if one operator or environment still governs how outcomes are formed, the coordination trilemma returns, and confidentiality, aggregation, and verifiability once again depend on a single point of control.

This essay turns to the next question: if execution must be distributed, what instantiates it, and what enforces it?

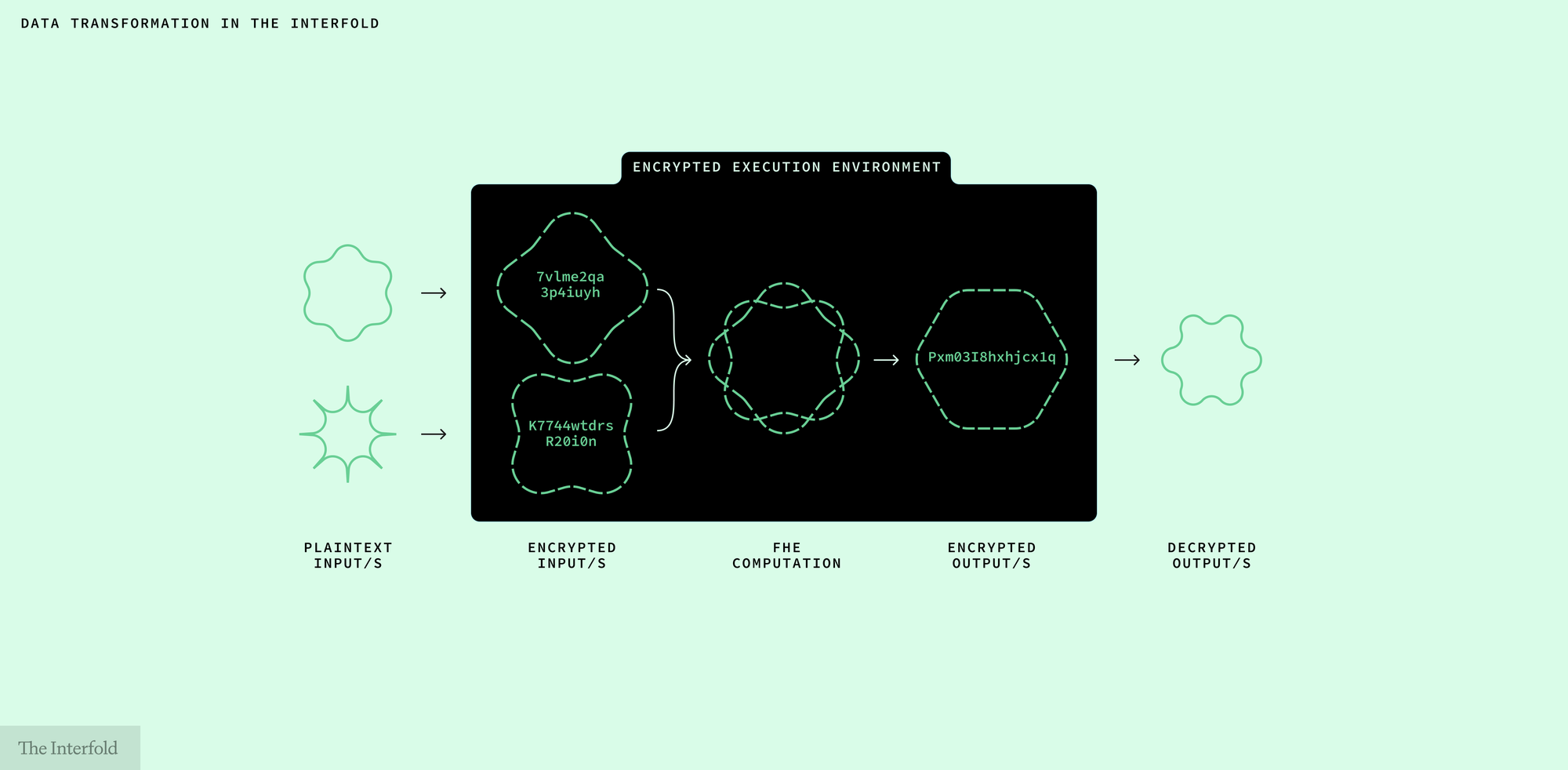

The Interfold answers this through Encrypted Execution Environments, or E3s: bounded execution surfaces instantiated for a specific computation and enforced by a network of ciphernodes.

What follows is how a sealed-bid auction, a secret ballot, or another confidential multi-party computation can be carried through without allowing execution authority to settle back into one environment or operator.

What Distributed Execution Must Do

Distributing execution authority does not remove the need for execution itself. A shared result still has to be produced. Private inputs must produce a legitimate output, one that can be independently verified as the result of the agreed computation rather than accepted as the unsupported claim of a single party.

A system that produces a shared result from private inputs must therefore ensure:

- inputs can be submitted without exposure

- many inputs can be brought into a single computation

- valid inputs cannot be omitted from the computation

- execution follows defined program logic

- the result can be attributed to a defined computation and verified as having followed its rules

- no single party controls input inclusion, execution, or outcome release

Distributed execution cannot mean merely scattering work across participants. It still has to provide a coherent way for many private inputs to become one authoritative result.

The question is not only who computes, but what governs each phase of the computation.

How the Interfold Structures Execution

The Interfold addresses these requirements by separating execution, enforcement, and release across distinct roles, each constrained by the others.

- Execution carries out the defined program.

- Enforcement governs the conditions under which that program can proceed and complete.

- Release governs when the permitted result can become available.

No single component can complete the computation alone, and no single point quietly accumulates authority over time.

E3s: where execution occurs

In the Interfold, the execution surface takes the form of an E3 (Encrypted Execution Environment). An E3 is not a single machine or product category. It is a bounded execution surface instantiated for one computation: a tally, a sealed-bid process, or another multi-party computation. Different underlying compute systems may realize that surface depending on the job.

An E3 is not a standing environment waiting to absorb inputs under the continuing discretion of a single operator. It is created for one computation, under one set of conditions, and for one bounded duration. It brings encrypted inputs from multiple participants into a single bounded computation, executes defined program logic over them, produces a verifiable result under the system’s defined conditions, and closes once that computation is complete.

That boundedness matters because a permanent execution environment accumulates authority simply by remaining in place. It governs repeated access, retains state across interactions, and defines the standing conditions under which future outcomes are formed. Over time, control over that environment becomes control over which inputs are admitted, when execution occurs, and when outcomes are recognized as valid.

More critically, persistent encrypted state held under the control of a single authority increases the blast radius of compromise over time. The longer that authority governs accumulated state, the more is exposed if it fails.

An E3 is structured to prevent that accumulation through its ephemerality. The secret state associated with that E3 is isolated from every other E3, and its proofs, outputs, and posted events remain available for verification.
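To make the idea of a bounded execution surface concrete, here is a minimal sketch of an object that accepts inputs only while open, closes exactly once, and keeps nothing but its public record afterwards. The class, field, and method names are hypothetical and chosen for illustration; they are not the Interfold's actual interfaces.

```python
from dataclasses import dataclass, field


class E3Closed(Exception):
    """Raised when an operation is attempted on an E3 that has already closed."""


@dataclass
class E3:
    # Hypothetical model of one bounded computation, not a real Interfold type.
    computation_id: str
    max_inputs: int
    _ciphertexts: list = field(default_factory=list)   # secret state, scoped to this E3 only
    public_record: list = field(default_factory=list)  # posted events kept for verification
    closed: bool = False

    def submit(self, ciphertext: bytes) -> None:
        """Accept one encrypted input while the E3 is open and under its bound."""
        if self.closed:
            raise E3Closed(self.computation_id)
        if len(self._ciphertexts) >= self.max_inputs:
            raise ValueError("input bound reached for this computation")
        self._ciphertexts.append(ciphertext)
        self.public_record.append(("input_posted", len(ciphertext)))

    def complete(self, encrypted_output: bytes) -> None:
        """Post the output, discard the secret state, and close for good."""
        if self.closed:
            raise E3Closed(self.computation_id)
        self.public_record.append(("output_posted", encrypted_output))
        self._ciphertexts.clear()  # nothing secret outlives the computation
        self.closed = True
```

Each computation gets its own instance, so there is no shared state for an operator to accumulate across runs: a second auction would construct a second `E3` rather than reuse the first.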

Ciphernodes and committees: how enforcement is distributed

An E3 does not secure itself. If bounded execution surfaces existed without distributed enforcement, authority would simply reappear elsewhere in the system, shifting from the execution environment to whatever operator governed its conditions.

In the Interfold, that enforcement layer is carried by ciphernodes. Ciphernodes are independent network operators responsible for threshold duties around key processes, execution conditions, and outcome release under protocol-defined constraints.

For each computation, a subset of ciphernodes is organized into a committee through a random selection process governed by the network rather than handpicked by a central operator. Authority, then, is distributed from the outset. No central operator appoints the committee after the fact, and no single committee member can determine input inclusion, completion, or release on its own.
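The selection mechanism itself is not detailed here. One way such a process could work, assumed purely for illustration, is hash-based sortition: every node is ranked by a hash of its identifier combined with a public seed, and the lowest-ranked nodes form the committee. The function name, the seed source, and the node identifiers below are all hypothetical.

```python
import hashlib
from typing import List


def select_committee(seed: bytes, node_ids: List[str], size: int) -> List[str]:
    """Pick `size` distinct nodes by ranking each node's hash ticket under a public seed."""
    candidates = set(node_ids)
    if not 0 < size <= len(candidates):
        raise ValueError("committee size must be between 1 and the number of nodes")

    def ticket(node_id: str) -> bytes:
        # Anyone who knows the seed can recompute every ticket and check the result.
        return hashlib.sha256(seed + b"|" + node_id.encode("utf-8")).digest()

    return sorted(candidates, key=ticket)[:size]


# Example: the seed is assumed to come from a source no single operator controls.
nodes = [f"node-{i}" for i in range(20)]
committee = select_committee(b"public-seed-for-auction-1", nodes, size=5)
```

Because the ranking depends only on the seed and the registered identifiers, any observer can recompute the committee, and no operator can choose its members after the fact.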

Key generation is shared, outcome release is threshold-controlled, and the result can only be produced under those distributed conditions. A system is not meaningfully distributed simply because many machines are involved. It is distributed when no single party can govern the runtime or determine outcome release alone.

This is where the distinction between production and release matters. A result may be produced in execution, but it only becomes a released result under the committee’s threshold conditions.

Execution, enforcement, and release are therefore separated across roles rather than collapsed into one environment or operator.

How Private Inputs Become a Shared Outcome

The Interfold combines two elements: E3s, which define a bounded execution surface, and ciphernodes, which enforce the conditions under which that surface operates. Together, they structure how private inputs can produce a shared outcome without being exposed or entrusted to a single operator.

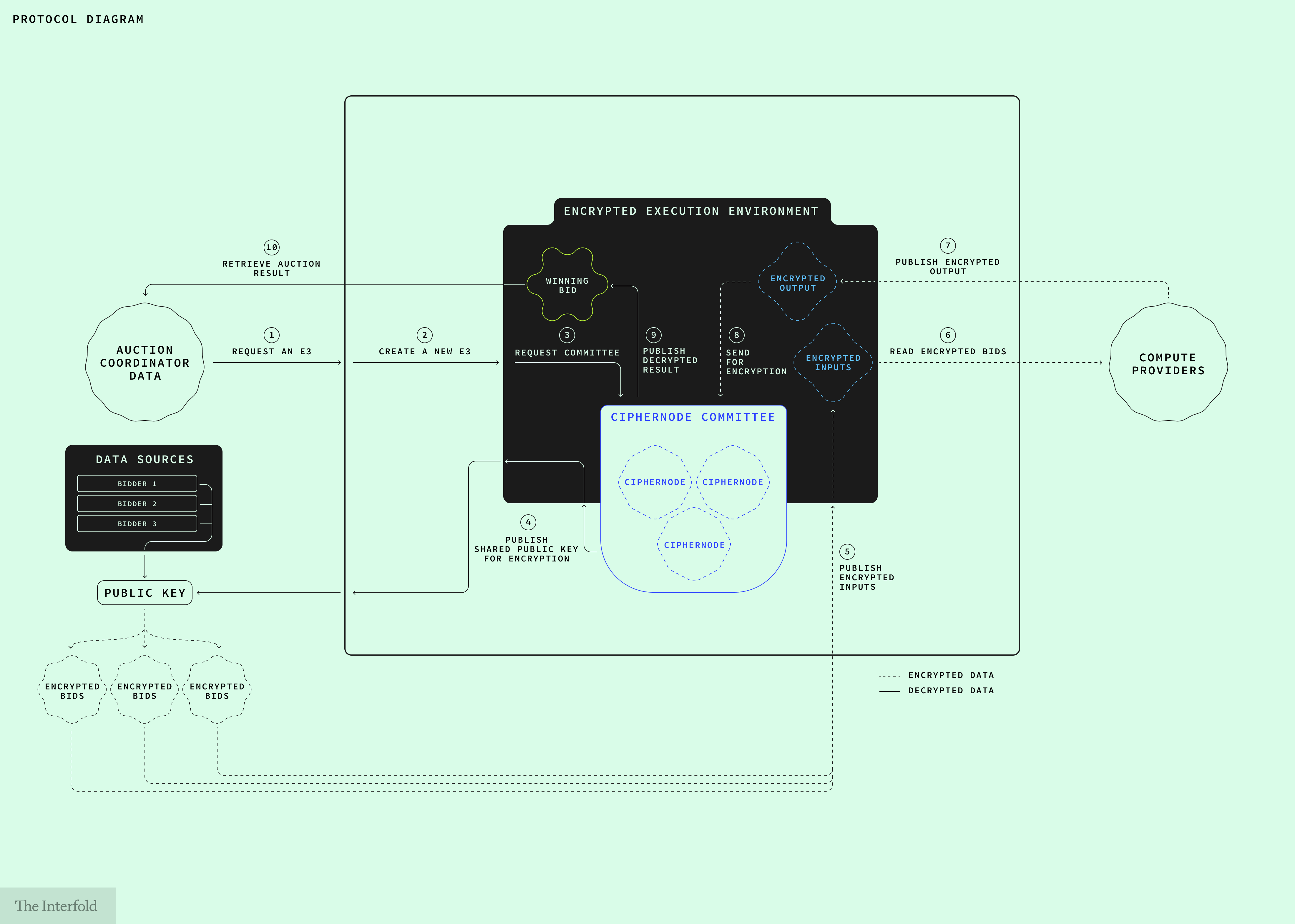

Consider a sealed-bid auction. Multiple bidders submit private bids, those bids are evaluated under defined rules, and a single result is produced without exposing the bids themselves. The same sequence applies to secret ballots, cross-institutional risk models, and confidential medical or financial analysis. What changes is the program being executed, not the structure of execution itself.

Execution, in this setting, is not a single event but a sequence carried out across different parties under defined conditions. The diagram above traces that sequence through the example of a sealed-bid auction.

Read from start to end, the sequence moves from auction request, to committee formation and encrypted submission, to execution, verification, and release.

- Request an E3: An auction coordinator requests an E3 to run the auction under defined rules and conditions.

- Create a new E3: The network instantiates an execution environment for that specific auction.

- Request committee: A committee of ciphernodes is formed through the network's random selection process to coordinate threshold setup and enforcement.

- Publish shared public key: The committee performs threshold key generation and publishes a shared public key for encrypted bid submission.

- Publish encrypted inputs: Bidders submit encrypted bids using the shared key. Inputs are never exposed in plaintext.

- Read encrypted bids: A selected compute provider (e.g. a zkVM system such as RISC Zero) reads the encrypted bids for execution, without access to their contents.

- Publish encrypted output: Execution produces an encrypted result – the winning bid or settlement price – and a verifiable claim that the computation followed the defined program.

- Prepare result for release: The encrypted result proceeds to the threshold release phase, where verification conditions are checked prior to decryption.

- Publish decrypted result: The result is decrypted under threshold conditions, with no single party able to control release.

- Retrieve auction result: The coordinator retrieves the final outcome. Losing bids remain private.

Once the permitted auction result has been released, the committee’s role in that E3 comes to an end. What remains are the outcome and the proof that it was produced under the defined conditions, not a standing environment that continues to accumulate authority.
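The ordering of the sequence above can be expressed as a small state machine in which each phase is accepted only if every earlier phase has already occurred. The phase names mirror the steps listed above; the `AuctionRun` class is a hypothetical illustration and does not model the cryptography behind each step.

```python
from enum import IntEnum


class Phase(IntEnum):
    E3_REQUESTED = 1
    E3_CREATED = 2
    COMMITTEE_REQUESTED = 3
    PUBLIC_KEY_PUBLISHED = 4
    ENCRYPTED_INPUTS_PUBLISHED = 5
    ENCRYPTED_BIDS_READ = 6
    ENCRYPTED_OUTPUT_PUBLISHED = 7
    RELEASE_PREPARED = 8
    DECRYPTED_RESULT_PUBLISHED = 9
    RESULT_RETRIEVED = 10


class AuctionRun:
    """Tracks one auction and refuses any phase that arrives out of order."""

    def __init__(self) -> None:
        self.history = []

    def advance(self, phase: Phase) -> None:
        if len(self.history) == len(Phase):
            raise RuntimeError("run already complete")
        expected = Phase(len(self.history) + 1)
        if phase != expected:
            raise RuntimeError(f"expected {expected.name}, got {phase.name}")
        self.history.append(phase)


run = AuctionRun()
run.advance(Phase.E3_REQUESTED)
run.advance(Phase.E3_CREATED)
# Skipping ahead, for example to DECRYPTED_RESULT_PUBLISHED, would raise RuntimeError here.
```

In this sketch a decrypted result cannot appear before a committee exists, a key has been published, and an encrypted output has been posted, which is the structural point of the sequence.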

Why Authority Remains Distributed

The distinction above is structural. The question now is whether that structure actually prevents authority from reconcentrating: If the Interfold still requires a defined execution surface, a compute provider, and a committee of ciphernodes, why does authority not simply reappear inside that arrangement in another form?

The answer is architectural. Submission, execution, verification, and release are distributed across distinct roles and phases. No single party holds the full chain of authority from private input to shared result. What is distributed is not just work, but the ability to determine when and how a result becomes legitimate.

No single party can access the underlying secrets, execute the computation at its own discretion, determine completion on its own terms, and then release the result unilaterally. Each role is partial:

- The compute provider executes the program, but does not govern release.

- Ciphernodes enforce threshold conditions, but no individual committee member holds enough material to expose protected inputs or complete the computation alone.

- The E3 provides the execution surface, but does not determine its own legitimacy.

A computation can be spread across many machines and still remain governed by one operator. The Interfold is structured differently. Its components are arranged so that no single operator can control the full path from private input to shared result. Authority does not disappear. It is divided in such a way that it can only be exercised collectively.

How This Structure Is Enforced

An architecture of separated roles does not enforce itself. The Interfold enforces this structure through a composition of cryptographic and economic constraints.

Confidentiality through execution

The first requirement is that private inputs remain private throughout the computation. If execution required exposing them to a single environment, the architecture would collapse back into custodial execution at its most basic layer.

In the Interfold, inputs remain encrypted during execution rather than merely before it. The network uses fully homomorphic encryption (FHE) and multi-party computation (MPC) to allow computation to proceed over protected inputs rather than requiring them to be pooled into a shared plaintext environment. Confidentiality is preserved not only in transit or at rest, but through execution itself.
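A toy example shows what computing over encrypted inputs means in practice. The sketch below uses the Paillier cryptosystem, which is only additively homomorphic and is not the FHE scheme the Interfold uses; it is swapped in because it fits in a few lines. The primes are far too small to be secure, the function names are illustrative, and a single party holds the private key here, whereas the architecture described in this essay would split that authority across a committee.

```python
import math
import secrets


def keygen(p: int, q: int):
    """Build a Paillier key pair from two primes (toy sizes, insecure)."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p-1, q-1)
    mu = pow(lam, -1, n)  # valid because the generator is fixed to n + 1
    return n, (lam, mu)


def encrypt(n: int, m: int) -> int:
    n2 = n * n
    while True:
        r = secrets.randbelow(n - 1) + 1
        if math.gcd(r, n) == 1:
            break
    return (pow(n + 1, m, n2) * pow(r, n, n2)) % n2


def add_encrypted(n: int, c1: int, c2: int) -> int:
    """Multiplying ciphertexts adds the underlying plaintexts."""
    return (c1 * c2) % (n * n)


def decrypt(n: int, private_key, c: int) -> int:
    lam, mu = private_key
    l_value = (pow(c, lam, n * n) - 1) // n
    return (l_value * mu) % n


n, private_key = keygen(1009, 1013)
ciphertexts = [encrypt(n, value) for value in (120, 340, 275)]

total = ciphertexts[0]
for c in ciphertexts[1:]:
    total = add_encrypted(n, total, c)  # no plaintext is visible at this step

assert decrypt(n, private_key, total) == 735
```

The party doing the addition never sees 120, 340, or 275, only ciphertexts. A tally fits this additive scheme directly; selecting a winning bid requires comparisons, which is one reason richer schemes such as FHE are needed.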

Correctness through verification

Encryption alone is not enough. A result must still be shown to have been produced by the defined program rather than by discretionary intervention. Zero-knowledge proof systems enable verifiably correct execution without revealing the underlying inputs. Verification constrains the execution path rather than leaving correctness to a trusted party.

Release through threshold control

Even a verified computation is not sufficient if the power to release its result remains singular. Threshold cryptography distributes control over decryption and release across committees of ciphernodes, ensuring that no single operator can unilaterally expose or finalize the result.
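The threshold property can be illustrated with Shamir secret sharing, in which a secret is split into n shares and any t of them recover it, while fewer than t reveal nothing about it. This is a sketch of the general idea rather than the Interfold's protocol: the specific scheme is not named here, and practical threshold decryption usually combines partial decryptions without ever reassembling the key in one place, as `combine` does below. The function names and parameters are illustrative.

```python
import secrets

PRIME = 2**127 - 1  # a Mersenne prime; all arithmetic is done modulo this field size


def split(secret: int, threshold: int, n: int):
    """Split `secret` into n shares, any `threshold` of which recover it."""
    coeffs = [secret] + [secrets.randbelow(PRIME) for _ in range(threshold - 1)]

    def evaluate(x: int) -> int:
        acc = 0
        for coefficient in reversed(coeffs):  # Horner's rule
            acc = (acc * x + coefficient) % PRIME
        return acc

    return [(x, evaluate(x)) for x in range(1, n + 1)]


def combine(shares) -> int:
    """Recover the secret by Lagrange interpolation at x = 0."""
    secret = 0
    for i, (xi, yi) in enumerate(shares):
        numerator, denominator = 1, 1
        for j, (xj, _) in enumerate(shares):
            if i != j:
                numerator = (numerator * -xj) % PRIME
                denominator = (denominator * (xi - xj)) % PRIME
        secret = (secret + yi * numerator * pow(denominator, -1, PRIME)) % PRIME
    return secret


release_key = 123456789
shares = split(release_key, threshold=3, n=5)  # one share per committee member

assert combine(shares[:3]) == release_key    # any three members suffice
assert combine(shares[2:5]) == release_key   # a different three work equally well
```

With a threshold of three out of five, no individual committee member, and no pair of them, holds enough material to release the result.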

Durability through incentives

The Interfold does not rely on cryptography alone. Its enforcement model also uses staking, rewards, and penalties to reinforce the bounded roles within the system.

This matters because distribution must remain durable under real conditions. Participants may fail, collude, or attempt to exploit their position. Economic constraints do not replace the cryptographic structure. They strengthen it by attaching consequences to the misuse of partial authority.
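How consequences attach to partial authority can be sketched as a settlement step run after each computation: committee members who fulfilled their duties are rewarded, and those who did not lose part of their stake. The reward amount, the penalty rate, and the settlement rule are assumptions made for illustration and do not describe the Interfold's actual economic parameters.

```python
from typing import Dict, List, Set


def settle(
    stakes: Dict[str, int],
    committee: List[str],
    fulfilled: Set[str],
    reward: int,
    penalty_bps: int,
) -> Dict[str, int]:
    """Return updated stakes after one computation.

    `penalty_bps` is the fraction of stake removed from a committee member
    that failed its duties, in basis points (500 = 5%).
    """
    updated = dict(stakes)
    for node in committee:
        if node in fulfilled:
            updated[node] += reward
        else:
            updated[node] -= updated[node] * penalty_bps // 10_000
    return updated


stakes = {"node-a": 1000, "node-b": 1000, "node-c": 1000}
after = settle(
    stakes,
    committee=["node-a", "node-b"],
    fulfilled={"node-a"},
    reward=10,
    penalty_bps=500,
)
# node-a is rewarded, node-b is penalized, node-c was not on the committee.
assert after == {"node-a": 1010, "node-b": 950, "node-c": 1000}
```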

These layers matter in combination. Encryption preserves confidentiality through execution. Verification constrains correctness. Threshold control constrains outcome release. Economic constraints reinforce the durability of those separations. Together, they keep execution from collapsing back into a single point of control.

What This Structure Guarantees

What this structure guarantees is not privacy alone, but a different condition of execution: one in which private inputs remain confidential throughout the computation, the defined program runs under constrained conditions, the outcome can be verified, and authority over completion and release stays distributed.

These guarantees are inseparable. Confidentiality without correct execution leaves the computation opaque. Correct execution without verifiability leaves the result dependent on authority. Verifiability without distributed authority leaves control concentrated at the exact point where outcomes are formed.

This is the coordination trilemma introduced earlier in the series. The Interfold resolves it at the level of execution itself. Execution no longer has to be the place where authority concentrates and risk accumulates. It becomes a bounded, distributed process through which many private inputs produce one verifiable result, and where the legitimacy of that result is structural rather than claimed.

The next question is what this makes possible.

Next in the series: how confidential coordination becomes usable in practice through concrete applications and system surfaces.
